Secure Messaging, Accessing Locked Phones, Retention of Seized Devices, Software Source Code, & More

Vol. 5, Issue 10

October 7, 2024

Welcome to Decrypting a Defense, the monthly newsletter of the Legal Aid Society’s Digital Forensics Unit. This month, Allison Young covers recent technical updates and legal turmoil in mobile messaging. Jerome Greco discusses law enforcement access to seized cell phones. Diane Akerman analyzes a recent D.C. Circuit Court decision about continued retention of cell phones. Finally, our guest columnists, Jessica Goldthwaite and Lisa Montanez, discuss DNA software source code.

The Digital Forensics Unit of The Legal Aid Society was created in 2013 in recognition of the growing use of digital evidence in the criminal legal system. Consisting of attorneys and forensic analysts, the Unit provides support and analysis to the Criminal, Juvenile Rights, and Civil Practices of The Legal Aid Society.

In the News

![Hands holding a phone in front of Gracie Mansion. The phone screen is translucent: we can see the mansion behind it, as well as the texts, which read, “Deleting messages in 5 ... [bomb emoji] 4... [bomb emoji] 3... [bomb emoji] 2... [bomb emoji]” and are followed by a large red “X.”](https://substackcdn.com/image/fetch/w_1456,c_limit,f_auto,q_auto:good,fl_progressive:steep/https%3A%2F%2Fsubstack-post-media.s3.amazonaws.com%2Fpublic%2Fimages%2F005b4985-0916-4f56-bff3-4e4249061d21_3206x2317.jpeg)

The Insecure-Secure Messenger is Dead, Long Live Insecure-Secure Messaging

Allison Young, Digital Forensics Analyst

It’s surprisingly hard to send a private message in 2024. Telegram users, shady characters, mixed-phone friend groups, and NYC’s own mayor have been learning this the hard way as of late. The past month’s headlines included an abundance of evidence showing that we really should be suspicious about the security of our communications.

Over the last few years, the government has had a spirited back and forth with criminal enterprises when it comes to using non-standard, privacy-focused phones. A few years ago, the FBI ran, tapped, and shut down ANOM phones, their own network of encrypted messaging offered through a fake tech startup. This month, a real product out of Australia called Ghost that was NOT secretly run by the FBI was shut down. Outlets are reporting that the platform, which like ANOM required the use of a specific “secure” device, was apparently a bit slipshod from a security standpoint. Unsurprisingly, its users were hacked as a result.

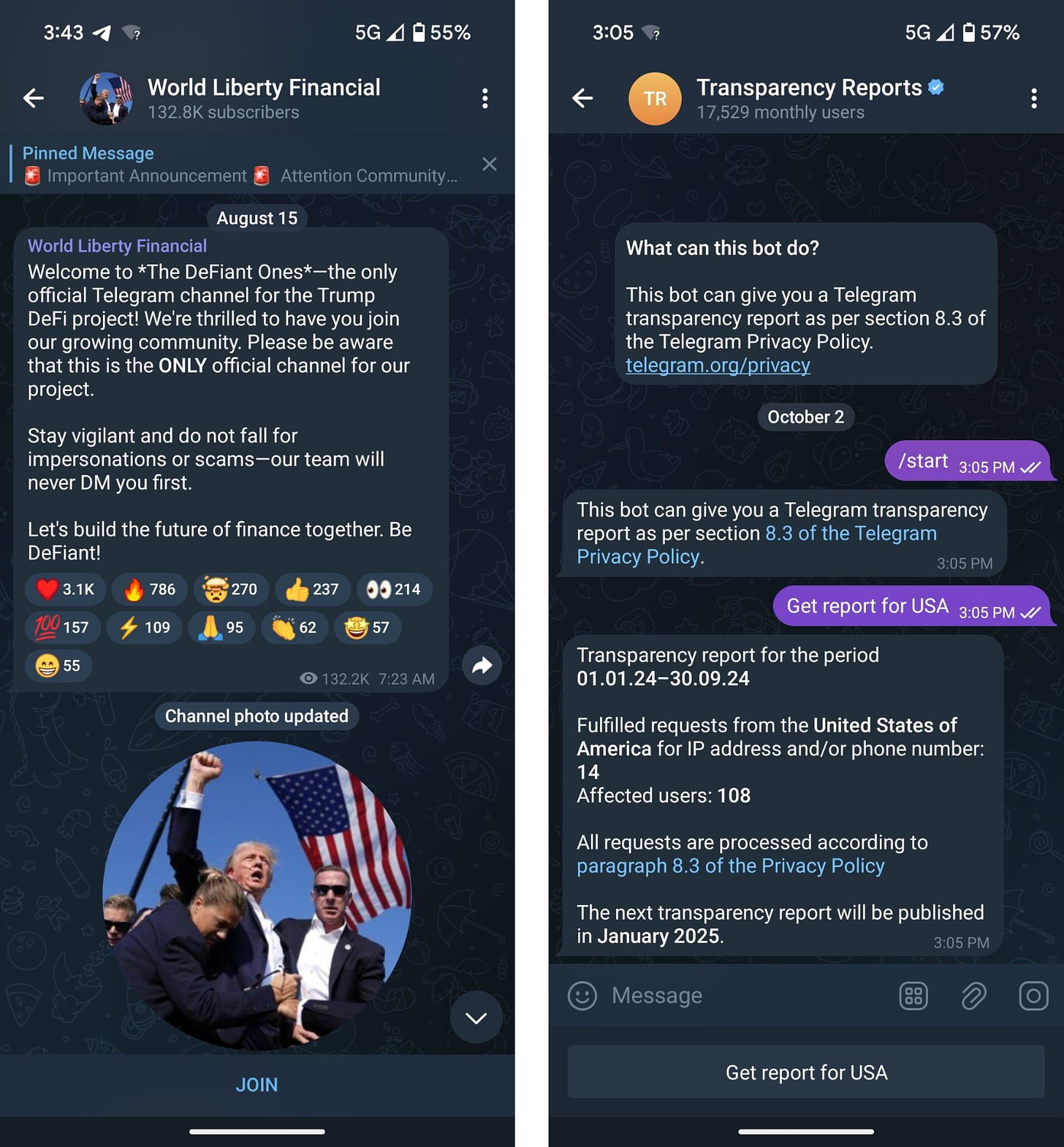

The market for apps you can use on a normal Android phone or iPhone is much larger, and it was also recently shaken when Telegram’s CEO, Pavel Durov, was taken into custody by French law enforcement. The app previously held a reputation for “talking the talk” about the importance of privacy, despite the fact that encryption settings were never available on every chat, nor were they enabled by default where they were available. Suspected (and confirmed) use of the platform for illegal business made Telegram a target, even if its public content was simultaneously aiding investigations.

Unlike normal text messaging or an app like Signal, Telegram’s selling point lies in its public channels and group chats, places where terrorists and hackers have been known to gather. Telegram has also built a strong relationship with the cryptocurrency industry, which itself experiences constant scrutiny due to the potential for money laundering, scams, and the trade of illegal goods. Over the past month, the platform has attempted to mitigate its legal woes by suggesting that it will start to actually moderate content and respond to valid legal requests for user data.

If you are a fan of secure messaging platforms, you may also want to pause before giving Discord a try. This platform will soon turn on end-to-end encryption for audio and video calls, but not for direct messages. The likely reason? Content moderation. While some encryption is progress, leaving direct messages unencrypted still allows law enforcement to obtain message content with a valid legal request. As with Telegram, the partial implementation of end-to-end encryption risks confusing less tech-savvy users into thinking that they are making a safe choice when it comes to privacy.

Android phone owners may have been relieved to hear that they can finally talk to iPhone users with RCS, a protocol that (unlike SMS/MMS) supports encryption and will allow you to react to messages from, and group chat with, Android users from an iPhone. However, they too should be concerned about the privacy of their messages. While Apple is FINALLY rolling out RCS in iOS 18, they have not implemented encryption. Keep in mind that your pretty, cross-platform conversations aren’t iMessages: they’re basically texts that your carrier and others may be able to snoop on.

Even the Eric Adams indictment [PDF] news cycle was unable to escape mobile app security gossip as allegations include:

Adams learning that the FBI wanted to access his phone, changing the password, and then forgetting it

Adams allegedly indicating to a staff member/potential co-conspirator that he “always” deletes their messages (which is a nice gesture, except it seems like they may still exist)

That same person deleting messaging apps they allegedly used to chat with Adams

The Intercept said it best when they titled their article on the subject “Encrypted Apps Can Protect Your Privacy — Unless You Use Them Like Eric Adams.”

If you can see messages on your phone, there’s a good chance the government can if they get access to your phone. When you use a “secure” messaging app, make sure you understand what it does, its limitations... and what it may leave behind.

The Case of the Mayor and the Locked Phone

Jerome D. Greco, Digital Forensics Director

No, this is not a new Hardy Boys novel, but it is still a mystery. New York City Mayor Eric Adams was recently charged with bribery and campaign finance offenses, and the 57-page indictment [PDF] reveals some interesting details of his prosecution. In particular, paragraph 48(d) on page 48 of the indictment describes the seizure of the mayor’s phones and the changing of his password.

On November 6, 2023, the FBI executed a search warrant for the mayor’s electronic devices, which included two cell phones. Adams was not in possession of his personal phone at that time, but, in response to a subpoena, he turned it over to federal authorities the next day. It is unclear if the phone was on or off at the time it was provided to the FBI, but it was locked. The mayor claimed that, a day before the FBI executed its search warrant, he had changed the passcode from four digits to six to prevent accidental deletion of content by his staff, and he also claimed that he had forgotten the new passcode within those two days. Almost a year later, Assistant U.S. Attorney Hagan Scotten told federal District Judge Dale Ho that they had not yet been able to access the mayor’s personal phone.

At the time of the initial device seizures, CBS reported that the two other phones (his non-personal phones) were iPhones. There are references across a small number of articles that his personal phone may also be an iPhone, but it is unclear if that is accurate. The type of phone (make and model), the operating system version, and the length and complexity of the password all affect whether a device can be accessed or how long it will take to gain access. Similarly, whether the device had been unlocked at least once since it was last turned off and back on may determine what data, if any, federal law enforcement can extract. For example, the difference between a Before First Unlock (BFU) and an After First Unlock (AFU) iPhone extraction may be significant.
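To give a rough sense of why passcode length matters, here is a minimal back-of-the-envelope sketch comparing a four-digit and a six-digit numeric passcode. The guess rate is purely an assumption chosen for illustration; it is not drawn from the indictment or from any real forensic tool, and real devices may throttle or limit attempts, which would change the timing considerably. The function names are hypothetical.

```python
# Hypothetical illustration only: the guess rate below is an assumption,
# not a measurement from any real device or forensic tool.

def keyspace(digits: int) -> int:
    """Number of possible all-numeric passcodes of the given length."""
    return 10 ** digits


def worst_case_hours(digits: int, seconds_per_guess: float) -> float:
    """Hours needed to try every passcode at a fixed, assumed guess rate."""
    return keyspace(digits) * seconds_per_guess / 3600


if __name__ == "__main__":
    ASSUMED_SECONDS_PER_GUESS = 1.0  # assumption for illustration
    for digits in (4, 6):
        hours = worst_case_hours(digits, ASSUMED_SECONDS_PER_GUESS)
        print(f"{digits} digits: {keyspace(digits):,} passcodes, "
              f"about {hours:.1f} hours to try them all")
```

Whatever the actual guess rate, the ratio holds: moving from four digits to six multiplies the number of possible passcodes by 100, so an exhaustive attempt takes 100 times as long under the same conditions.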

An additional issue is whether a prosecutor can have a court compel a person to provide their passcode. While most courts have found that if the government has a proper search warrant they can force people to unlock their devices with biometric locks (e.g. fingerprints and Face ID), courts across the country are split over if and when a person can be compelled to provide a passcode. Judges have routinely found that you do not have a Fifth Amendment right in your fingerprint or your face, but whether the Fifth Amendment protects an individual from being forced to utter, write down, or enter a passcode is not nearly as agreed upon. The National Association of Criminal Defense Lawyers has put together a useful primer discussing the various issues compelled decryption raises, as well as reviewing the different court decisions and theories. However, even less clear is what can happen if a person claims they genuinely forgot their passcode, as Mayor Adams has claimed.

Even if the government has used every tool in its technological arsenal to try to “break into” Mayor Adams’ personal cell phone and has still been unsuccessful, it does not mean that the phone will forever remain inaccessible. The technology improves and changes rapidly, and some tools may take a while to succeed. It may just be a matter of time, and federal prosecutors seem confident they will prevail: “‘Decryption always catches up with encryption,’ Scotten said. ‘But we don't know what we have until we can access it.’”

In the Courts

D.C. Circuit Rules on Retention of Seized Cell Phones

Diane Akerman, Digital Forensics Staff Attorney

Ten years out from the landmark Riley case, courts are still grappling with the application of the Fourth Amendment to cell phones. Cell phones may be seized pursuant to a lawful arrest, but there is often little oversight of when a seizure is re-categorized from “safekeeping” to “investigatory,” thereby making their recovery nearly impossible.

Asinor v. D.C. is a consolidated appeal from a number of cases of individuals who had been arrested by the Metropolitan Police Department (MPD), released without charges or any further prosecution, and were unable to recover their cell phones despite repeated requests. 111 F.4th 1249 (D.C. Cir. 2024). The District Court reasoned that the Fourth Amendment governed the initial seizure of the devices, but that continued retention was governed by the Fifth Amendment. The District Court further reasoned that under the Fifth Amendment, D.C. Law 41(g) gave all plaintiffs adequate due process - an ironic statement considering this case was brought only after a year or more of litigation under 41(g) had yielded no results for the plaintiffs.

On appeal, the Circuit Court found that there is a “Fourth Amendment seizure if the government (1) meaningfully interferes with possessory interests in property in a way that (2) would have been understood to be an actionable property tort at common law.” Id. at 1254.

The Circuit Court came to a few very, you might say, reasonable, conclusions. First, they acknowledged the real, practical harm caused by depriving someone of their cell phone - the financial harm to replace it (cell phones, still expensive!), as well as lost access to important information like passwords, photographs, and contact information. The discussion is notable in that most courts pay lip service to Riley’s proclamations about the importance of cell phones, right before explaining why the government still has a right to invade your privacy. Second, the Court vehemently disagreed with the lower court’s finding that a “seizure” is a “single act” rather than a “continuous act” - a semantic argument that allowed the district court to find the Fourth Amendment did not apply to continued retention. Id. at 1257. Third, they found that (obviously) many constitutional provisions can apply to a single act, unlike the district court, which believed that only the Fifth Amendment applied to continued possession and the Fourth only to the “single act” of the seizure. Id. at 1259.

A particularly important takeaway from this case though, is the discussion of D.C. Law 41(g) - which provides for the “due process” an aggrieved person can utilize to move for return of their property. The court was “reluctant to reduce this entirely to a question of D.C. law, which would eliminate substantive constitutional protection for the property rights at issue.” Id. at 1259.

As those who practice in New York City and are familiar with Rule 12-34 of the Rules of the City of New York are well aware, these administrative city laws are often woefully inadequate at providing any real mechanism for recovering property, and there are few, if any, practical enforcement mechanisms. Extending real Fourth Amendment protections and jurisprudence to these situations would go a long way towards securing the return of individual property - a task that at the moment is often impossible.

Expert Opinions

We’ve invited specialists in digital forensics, surveillance, and technology to share their thoughts on current trends and legal issues. This month’s article is a collaboration between guest columnists from The Legal Aid Society: Jessica Goldthwaite, staff attorney with the DNA Unit, and Lisa Montanez, paralegal II with the Digital Forensics Unit.

This does not compute: Why isn’t forensic software adequately tested?

In recent years, there have been significant technical and computing advances in numerous forensic fields. One such example is forensic DNA analysis, where the development of increasingly sensitive methods able to detect smaller and smaller amounts of DNA has meant analysts are now facing significantly more complex, low-level mixtures, as opposed to DNA from just one person in plentiful amounts. As a result, labs turned to software to interpret these profiles. The use of probabilistic genotyping (PG) software to analyze DNA samples has become an integral part of routine case work in many if not most forensic DNA labs.

PG software developers have marketed their products by touting their ability to reliably analyze complex samples based on their developmental testing. Prosecutors rely on that testing to demonstrate reliability, as well as validation studies performed by laboratories who purchased the software, and whose primary customers are law enforcement. But how much does that claim mean in the absence of independent, adversarial testing? The same question could be asked of a number of software programs used to generate evidence for a criminal proceeding such as COMPAS (a recidivist prediction tool) and ShotSpotter.

Developers of software used in the criminal justice system often resist sharing their software, the source code, and the data used to develop the programs with the defense so that these claims can be tested. They often invoke trade secrets to shield disclosure of software code, as well as other materials critical for an adequate review of the program such as training data, validation materials, and user manuals. A main component of their argument is the assertion of trade secrets as an evidentiary privilege to limit disclosure in court of some or all of the inner workings of their programs, on the basis that disclosure will harm their business interests. If it seems constitutionally problematic that a company would get to shield disclosure to a defendant in a criminal trial to protect business interests, it is. A client’s due process rights shouldn’t be trumped by a business interest in maintaining trade secrets [PDF].

In the case of PG, the developers of the most widely used systems in the United States, STRmix and TrueAllele, currently provide for the review of source code and other materials under non-disclosure agreements. But NDAs can be overly restrictive and prohibit meaningful review.

In State v. Pickett, 466 N.J. Super. 270 (App. Div. 2021), the court held that the defense was entitled to trade secrets, namely the source code and other validation documentation for the PG program TrueAllele, in order to cross examine the State’s witness at a Frye hearing. The court rejected the trade secrets claims the developer raised and held that the appropriate remedy to protect intellectual property interests of the developer while balancing that against the rights of the accused was a protective order that provided for a meaningful inspection by the defense (e.g., not relegated to pen and paper). This was contrary to the onerous terms in the NDA demanded by the software developer. The court established a four-part test to determine if the defendant has established a particularized showing of need for the material and found that Pickett had satisfied it. Id. at 279.

Unfortunately, People v. Wakefield, 38 N.Y.3d 367 (2022), held that the trial court did not commit error in denying Wakefield’s request for discovery of the source code for a Frye hearing or for cross-examination purposes at trial. The defense did not attempt to subpoena the code or make a further motion demonstrating a particularized need, and the trade secret issue was not squarely before the court. However, in her concurrence, Judge Rivera insisted that the trial court erred in ruling that TrueAllele was generally accepted without defense and third-party access to the source code, highlighting that at the time of trial the “source code and underlying algorithms were kept from independent evaluators and the defense as trade secrets.” Id. at 387. She reasoned that, “[b]ecause there was no opportunity for members of the relevant scientific community to review the source code, and specific software using a complex algorithm cannot be deemed reliable in the scientific community without an independent review of how the software reaches its conclusions—including the inferences made by artificial intelligence—the prosecution failed to satisfy its burden at the Frye hearing.” Id.

As Rivera’s concurrence recognizes, scrutiny by scientists independent of the developers is key and, while a recent concept for forensic scientists, is nothing new for software developers. The concept of independence forms the backbone of software engineering standards developed by the Institute of Electrical and Electronics Engineers (IEEE), the world’s largest organization of software developers and computer scientists. Independent Verification & Validation (IV&V) requires an external independent review: IV&V is “performed by an organization that is technically, managerially, and financially independent of the development organization.” (emphasis added). Scientists and advocates have made numerous calls for requiring forensic DNA and other forensic software to comply with IEEE standards and IV&V.

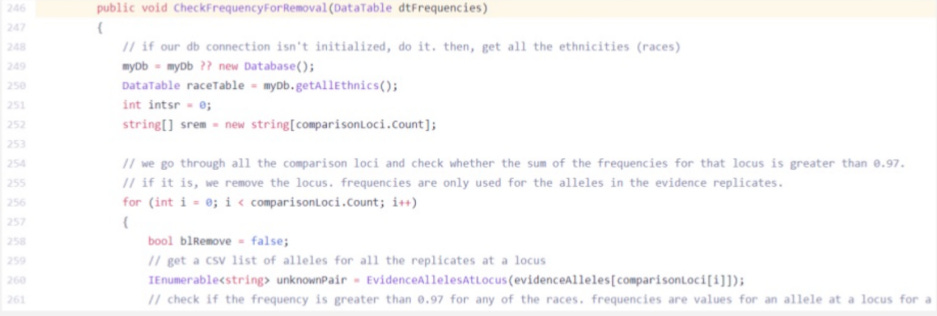

As United States v. Johnson illustrated, independent review of software code can uncover flaws. The federal defenders in Johnson convinced the court to order disclosure of the source code for the OCME’s homebrew DNA analysis program Forensic Statistical Tool to the defense. Retained expert Nathan Adams conducted the very first review of the code performed by anyone independent from the lab and discovered a function which when triggered by certain circumstances discarded data, including data favorable to the defense.

A snippet of the function that Nathan Adams discovered in the FST source code appears below:

Well-respected scientific researchers know and understand the importance of subjecting their results to independent review. In fact, they welcome it. In the field of forensics, this should be advocated for fiercely as the consequences of faulty forensics may deprive a person of life and liberty. The push for transparency of PG software and independent, adversarial testing is a step toward ensuring that along with scientific advances we are also not empowering the systemic injustices that continue to plague our criminal legal system.

Jessica Goldthwaite (she/her) has been a staff attorney with the DNA Unit of the Legal Aid Society since its founding in 2013. She assists, trains, and educates criminal defense attorneys and clients in DNA matters, and advocates for forensic DNA-related reforms and policy initiatives. Before joining the DNA Unit, Jessica was a staff attorney in the Brooklyn trial office of Legal Aid for seven years.

Lisa Montanez (she/her) is a paralegal II with the Legal Aid Society’s Digital Forensics Unit. She has a MS in Forensic Science from the University of California, Davis where she studied and developed a method to identify fentanyl analogs. Prior to joining the Digital Forensics Unit, she provided her scientific expertise pro bono to the Legal Aid Society’s DNA unit and has worked in a number of roles in private industry and government including as a pharmaceutical scientist, forensic scientist, and regulatory professional.

Upcoming Events

October 7-8, 2024

Artificial Justice: AI, Tech and Criminal Defense (NACDL) (Washington, DC)

October 14-15, 2024

Artificial Intelligence & Robotics National Institute (ABA) (Santa Clara, CA)

October 15-18, 2024

2024 International User Summit (Oxygen Forensics) (Alexandria, VA)

October 17, 2024

EFF Livestream Series: How to Protest with Privacy in Mind (Virtual)

Artificial Intelligence “Level Setting” for Attorneys: The Essentials of AI, Responsible Use, and Privacy (NYCLA) (Virtual)

October 19, 2024

BSidesNYC (New York, NY)

October 31, 2024

Data Privacy on Trial – A Comparative Analysis of Enforcement, Damages, Sanctions, and Standing in EU and U.S. Law (Berkeley Center for Law & Technology) (Berkeley, CA)

November 18, 2024

The Color of Surveillance: Surveillance/Resistance (Georgetown Law Center on Privacy and Technology) (Washington, DC)

November 18-20, 2024

D4BL III (Data for Black Lives) (Miami, FL)

December 5, 2024

Medical Police, Forensics, and Data-Driven Policing (NACDL) (Washington, DC)

March 24-27, 2025

Legalweek New York (ALM) (New York, NY)

April 24-26, 2025

2025 Forensic Science & Technology Seminar (NACDL) (Las Vegas, NV)

Small Bytes

Did an AI write up your arrest? Hard to know (Politico)

We Hunted Hidden Police Signals at the DNC (Wired)

Cops lure pedophiles with AI pics of teen girl. Ethical triumph or new disaster? (Ars Technica)

Apple partners with third parties, like Google, on iPhone 16’s visual search (TechCrunch)

A courts reporter wrote about a few trials. Then an AI decided he was actually the culprit. (Nieman Lab)

FTC Announces Crackdown on Deceptive AI Claims and Schemes (Federal Trade Commission)

Many cameras. Little focus. Blurry results. (Chicago Tribune)

Adams aide — and a close personal friend — pushed city officials to hire tech firm (Politico)

Cops Battle Data Brokers for Privacy in Constitutional Clash (1) (Bloomberg Law)

License Plate Readers Are Creating a US-Wide Database of More Than Just Cars (Wired)

DOI probing NYC Mayor Adams administration over Evolv subway weapons scanner technology: sources (NY Daily News)