Surveillance of NYCHA Residents, Facial Recognition False Arrest, Karen Read Trial Evidence, Deleted Messages & More

Vol. 6, Issue 9

September 8, 2025

Welcome to Decrypting a Defense, the monthly newsletter of the Legal Aid Society’s Digital Forensics Unit. This month, Laura Moraff talks about NYPD’s surveillance of NYCHA residents, Diane Akerman discusses another wrongful arrest based on unreliable facial recognition technology, Lisa Brown examines the digital evidence presented in the Karen Read case, and Allison Young answers a question about deleted data.

The Digital Forensics Unit of The Legal Aid Society was created in 2013 in recognition of the growing use of digital evidence in the criminal legal system. Consisting of attorneys and forensic analysts, the Unit provides support and analysis to the Criminal Defense, Juvenile Rights, and Civil Practices of The Legal Aid Society.

In the News

NYPD Secretly Spying on NYCHA Residents

Laura Moraff, Digital Forensics Staff Attorney

Reliable access to the Internet is crucial in today’s world. In 2022, New York City’s Office of Technology and Innovation (OTI) partially replaced the de Blasio administration’s Internet Master Plan – a program that relied on local Internet providers to ensure that NYCHA residents had broadband access – with Big Apple Connect. Big Apple Connect purportedly served the same goal, but it relied on larger service providers instead of smaller, local ones. The program was pitched as a way to help narrow the digital divide.

The City also had other goals for Big Apple Connect, which included using the program to expand police surveillance of public housing and those who live in it. New York Focus recently discovered that, from the program’s initiation, OTI intended to “leverage Big Apple Connect connections to pull orphaned camera systems into the NYPD’s domain awareness system.” And in fact, in July of this year, an NYPD spokesperson told New York Focus that the program allows the NYPD to access live feeds of NYCHA cameras and view footage from the past 30 days. The NYPD is able to access the footage without getting NYCHA’s permission.

NYCHA currently has more than 20,000 cameras monitoring entryways, hallways, laundry rooms, lobbies, and courtyards. The NYPD reportedly plans to connect video cameras at 20 NYCHA developments to its Domain Awareness System by the end of this year, though it has declined to disclose which developments. The NYPD has not said whether it intends to expand beyond those 20 sites.

We cannot accept indiscriminate police surveillance of people’s homes as a condition of living in public housing or gaining Internet access. On August 25, New York City Councilmembers Jennifer Gutiérrez, Yusef Salaam, and Chris Banks sent a letter to the Adams administration demanding a halt to any expansion of the NYPD’s access to NYCHA cameras using the Big Apple Connect program. The letter emphasized OTI’s failure to disclose the surveillance component of Big Apple Connect, despite having many opportunities to do so in Council testimony, contract materials, and public dashboards. The Councilmembers also demanded transparency with respect to data-sharing and adoption of new surveillance technologies. We wholeheartedly support the letter’s demands and hope this surveillance will go no further.

Oops, Facial Recognition Did It Again

Diane Akerman, Digital Forensics Staff Attorney

The New York Times reported on the case of Trevis Williams, a Brooklyn man who was wrongfully arrested based on a faulty facial recognition technology (FRT) match. The misidentification came to light when Mr. Williams’ attorney referred the case to the Digital Forensics Unit (DFU) to confirm his alibi. The case highlights not only the pernicious problems with FRT itself, but also how the NYPD’s use of it exacerbates them.

To find evidence of Mr. Williams’ location at the time of the incident, DFU started with the two most reliable and accessible places to find location information – data from Mr. Williams’ cell phone, and historical cell site location information (HCSLI) obtained directly from his cell service provider.

Senior Analyst Lisa Brown conducted an extraction of Mr. Williams’ phone to look for any location evidence. A search of the phone found a photo of a car GPS screen. Mr. Williams had taken the photo and texted it to his friend – it showed his location, and an ETA of 3:40pm in Brooklyn. Metadata confirmed the time and date the photo was taken. Senior Analyst Brandon Reim mapped the HCSLI, which showed Mr. Williams’ path from Connecticut, where he was working at the time, providing further evidence of the time and date of the photo. The HCSLI placed Mr. Williams around Marine Park, Brooklyn before, after, and during the incident.

If Mr. Williams was in Brooklyn – 12 miles away – at the time of the incident, how did he find himself charged with a crime he didn’t commit? Enter facial recognition.

The detective in this case submitted the surveillance video to the NYPD Facial Identification Section (FIS), and also immediately to the NYPD Special Activities Unit of the Intelligence Division (SAU). Section 212-129 of the NYPD Patrol Guide [PDF] outlines the protocols for use of FRT – a protocol that envisions use of FRT nearly exclusively by the FIS unit and provides limited guardrails and documentation requirements. Yet, in this relatively run-of-the-mill misdemeanor, without any explanation or reasoning, the surveillance video was sent to the Intelligence Bureau. FIS did not find a possible match. The Intelligence Bureau, on the other hand, did.

Why wasn’t a match identified by FIS? Why did SAU get a match when FIS didn’t? Could they be using a different, potentially prohibited, photo repository? Do they have a lower threshold for identifying a possible match? FIS officers are not required to keep any documentation about their process when they conclude there is no match. SAU doesn’t even preserve the bare minimum FIS does in the case of a match – the first eight “candidates” returned by the system.

The NYPD’s policy makes clear that a possible match identified using FRT cannot be the basis for probable cause and investigators are supposed to continue, you know, investigating. The victim told officers she had seen the individual in the area before. Did the police canvass the area at any point? The individual in the surveillance video was wearing an Amazon jacket, using the Amazon delivery app. Did the officer contact Amazon for records of employment to see who may have been working in the area that day, or to find out if Mr. Williams was an Amazon employee at the time (he wasn’t)? Did they check Mr. Williams’ address to see if he lived in, worked in, or frequented the area for some reason?

Instead, the police chose what ACLU attorney Phil Mayor aptly described as “The pipeline of ‘get a picture, slap it in a lineup.’” The victim was shown a photo array with Mr. Williams’ picture and that of five other men who bore almost no resemblance other than also being black men with a similar hairstyle. When the victim selected Mr. Williams, she was simply confirming, as FRT had, that Mr. Williams “looks the most similar” to the person in the video. The detective activated a probable cause I-card. No additional investigation was undertaken, not even an attempt to locate and arrest Mr. Williams.

This process exemplifies the problem with reliance on FRT.

FRT does not claim to find a match. It finds “possible matches”: the entries in a limited repository, typically containing only mugshots, that most resemble the probe photo. And it’s not very good at it, particularly in the case of black men. After decades of over-policing of black communities and the structural racism of the criminal legal system, black men are overwhelmingly represented in mugshot databases, which presents a very real problem. That problem is then compounded by an officer – a non-eyewitness – “visually” determining which individual they also believe looks most similar to the probe photo. The problem with eyewitness identification is well-documented; it increases tenfold when a non-eyewitness [PDF] is asked to identify a person they have never seen other than in surveillance video.
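
As a rough illustration of why a “possible match” is not the same thing as an identification, the sketch below ranks a gallery by similarity to a probe and returns the top few entries. It is a toy model under stated assumptions, not any vendor’s or the NYPD’s actual system: the three-number “face vectors,” the entry names, and the `possible_matches` function are all hypothetical, and real systems use far larger learned feature vectors.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def possible_matches(probe, gallery, k=3):
    """Rank every gallery entry by similarity to the probe and return the top k.

    Note what is missing: there is no branch that answers
    "this person is not in the gallery at all".
    """
    scored = [(name, cosine(probe, vec)) for name, vec in gallery.items()]
    return sorted(scored, key=lambda item: item[1], reverse=True)[:k]

# Hypothetical gallery. Assume none of these entries is the person in the probe image.
gallery = {
    "entry_1": [0.9, 0.1, 0.3],
    "entry_2": [0.2, 0.8, 0.5],
    "entry_3": [0.4, 0.4, 0.7],
    "entry_4": [0.1, 0.2, 0.9],
}
probe = [0.5, 0.5, 0.5]

candidates = possible_matches(probe, gallery)
for name, score in candidates:
    print(f"{name}: similarity {score:.3f}")
```

Even though the probe’s true source is assumed to be absent from this gallery, the search still hands back a ranked list of candidates. Whether a real system reports nothing below some similarity threshold is a configuration choice, which is why the threshold questions raised above matter.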

Many jurisdictions have acknowledged the enormous problems with this exact process. Indiana and Detroit have laws and guidelines prohibiting law enforcement from conducting ID procedures based solely on FRT results. Montana and Utah require warrants to even use FRT in the first place. Many states require regular auditing, oversight, and reporting. The NYPD, meanwhile, maintains a five-year-old website that simply lists the number of possible matches identified, with nothing about whether those matches ultimately led to arrests or convictions, and a now-proven-false claim that, “The NYPD knows of no case in New York City in which a person was falsely arrested on the basis of a facial recognition match.” It is easy to stand by this claim if you simply refuse to accept evidence to the contrary.

When Mr. Williams found himself in that interrogation room at the 13th Precinct, his insistence that he was not the man in the surveillance images fell on deaf ears. His plea for officers to get Amazon records was ignored. They told him, “we could do all that but it wouldn’t mean anything . . . your lawyer and the DA’s office will work it out in court.” When presented with incontrovertible location evidence, the prosecution insisted they could go forward with just the identification from the victim.

The moment facial recognition entered this investigation the damage was done. The claim that this is an isolated instance of bad police work is willful naïveté. Without this dangerous technology Mr. Williams, and dozens of others, would not have been wrongfully arrested and charged with crimes they didn’t commit, left hoping the truth would somehow find its way into the courtroom.

In the Courts

2:27 AM or 6:24 AM? Experts Clash Over Timing of Google Search in Karen Read’s Murder Trial

Lisa Brown, Senior Digital Forensics Analyst

On January 29, 2022, Boston police officer John O’Keefe was found dead in the snow outside the home of fellow officer Brian Albert in Canton, Massachusetts. O’Keefe’s girlfriend, Karen Read, had dropped him off at the house around midnight, where a group had gathered after a night of drinking. Early that morning, Read and two friends returned to look for O’Keefe, found his body in the snow, and called emergency services. Read was later charged with manslaughter and leaving the scene of a collision resulting in death.

Prosecutors alleged that Read killed O’Keefe by backing into him with her SUV after dropping him off. Witnesses inside the house claimed that O’Keefe never came inside. The defense argued that O’Keefe was attacked in the house, dragged outside and left to die in the cold. The defense claimed that the officers involved in the incident had the opportunity to frame Read.

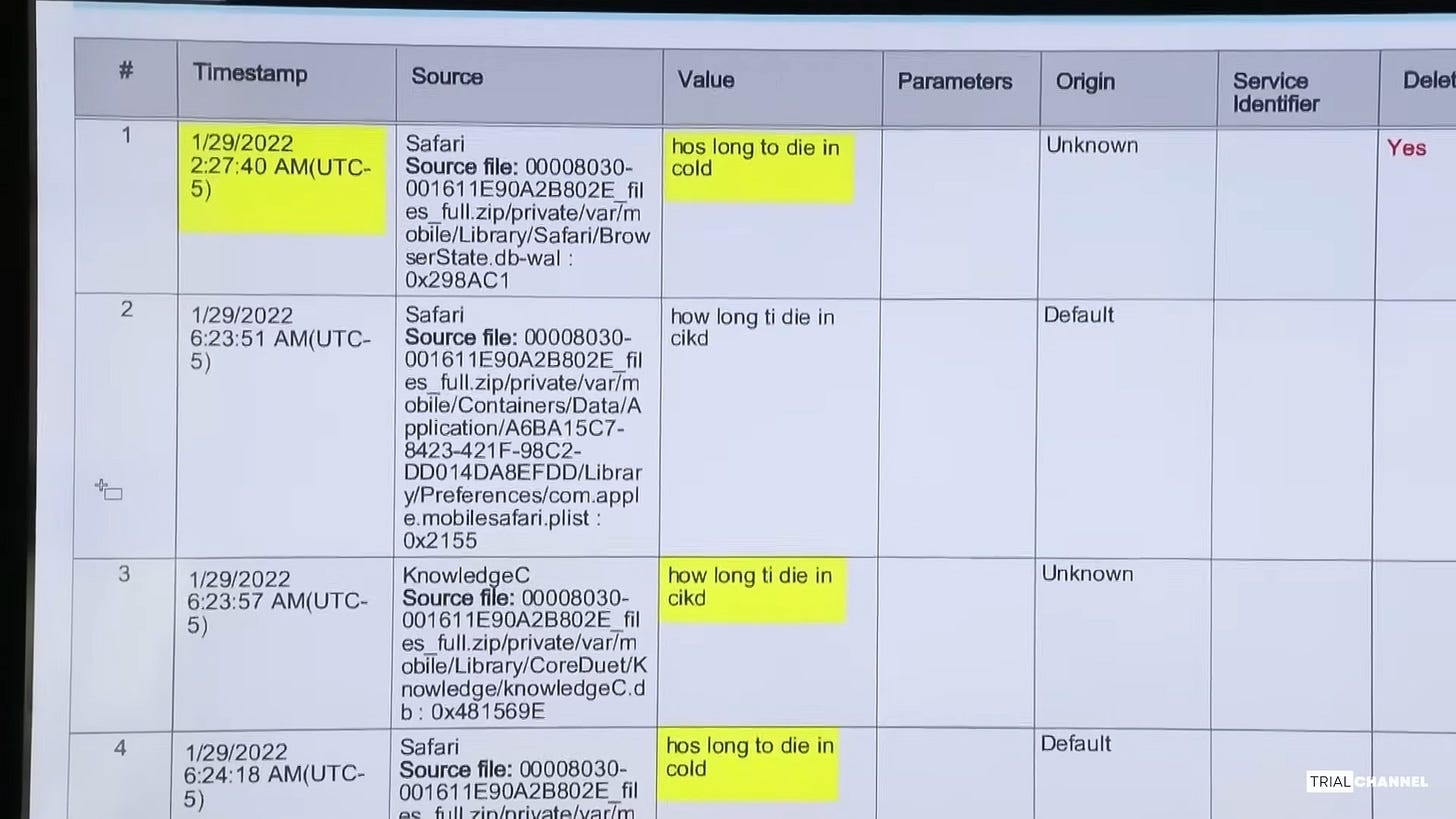

A variety of experts testified on both sides including accident reconstruction experts, medical examiners and digital forensics experts. One heavily contested piece of evidence was a misspelled Google search for “hos long to die in cold,” found on the iPhone of Jennifer McCabe. McCabe is the sister-in-law of the homeowner, Brian Albert. She was at the Albert residence the evening that O’Keefe died and was with Read the following morning when they found the body.

Defense expert Richard Green testified that the Google search on McCabe’s phone occurred on January 29th at 2:27 AM. This was hours before O’Keefe’s body was found, suggesting McCabe knew he was outside and injured well before the body was found, implicating her in a cover-up.

Prosecution expert Ian Whiffin testified that the Google search occurred on January 29th at 6:24 AM, just after O’Keefe’s body was discovered. This timing suggested that the Google search was done out of frantic concern for the unresponsive victim.

How could two digital forensics professionals analyze a phone extraction and reach different conclusions about the time of an internet search? Digital artifacts are not always as straightforward as they seem. Apple’s operating system (iOS), which controls how data is accessed and stored on iPhones, is not open source. Apple also does not provide public documentation on the structure of its internal databases. As a result, analysts don’t always know where data is stored, how it’s formatted, or what Apple’s internal naming conventions mean within a database.

To work around this, digital forensics companies like Cellebrite develop tools that extract files from mobile devices and attempt to reconstruct the data in a way that approximates how it appeared on the phone. The following defense exhibit is a snippet of a Cellebrite report shown at trial. It shows “Searched Items” that Cellebrite parsed from Jennifer McCabe’s iPhone extraction. The artifacts are drawn from several files including Safari’s History.db (which logs visited URLs), Safari’s BrowserState.db (which contains browser tab data), knowledgeC.db (which tracks app usage) and Safari’s mobilesafari.plist (which contains browser settings and recent search terms).

In this case, the contested artifact was found in Safari’s BrowserState.db WAL (Write Ahead Log) file. A WAL file temporarily stores new or modified data before it’s committed to the database.

Defense expert Richard Green testified that in order to understand how Jennifer McCabe’s phone handled internet activity, he analyzed the extraction and searched for artifacts connected to specific websites. He found in each case that the timestamp in BrowserState.db was the final entry associated with a given URL. Based on this pattern, he concluded that on this particular phone and iOS version, the BrowserState.db timestamp reflects the latest possible time the web activity could have occurred – meaning the search happened at or before 2:27 AM.

Green also testified that the search was deleted by the user. He said Cellebrite flagged it as a user deletion and he was not aware of any system processes on the phone that would have caused the deletion.

Prosecution witness Ian Whiffin, a Cellebrite employee, testified that timestamps in BrowserState.db can be easily misinterpreted. He explained that the 2:27 AM timestamp actually reflects the time that the browser tab last “took focus” – meaning it was either opened or switched to – not when the search was performed. Minimizing Safari or reopening Safari does not update this timestamp, but fully closing the app or restarting the phone will cause the tab to reload and the timestamp to update.

Whiffin clarified that when a Safari tab’s session data is updated in BrowserState.db, the URL field reflects the most recent website visited in that tab, but the timestamp does not necessarily correspond to that visit. In this case, when the Cellebrite software gathered all “Searched Items” from McCabe’s phone extraction, it included the search term “hos long to die in cold” from BrowserState.db along with the timestamp the tab last took focus (2:27 AM). Whiffin stated the actual search occurred at 6:24 AM based on the timestamps found in mobilesafari.plist and knowledgeC.db. Whiffin added that Cellebrite recognized that the timestamp in BrowserState.db is misleading and has since removed it from analyzed results in newer versions of its software.
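
The behavior Whiffin described can be pictured with a toy model. This is only a sketch of the testimony as summarized here, not Safari’s actual code or the real BrowserState.db schema: the `TabRecord` class, its field names, and the example URLs are hypothetical, and only the two clock times come from the trial testimony.

```python
from dataclasses import dataclass
from datetime import datetime

@dataclass
class TabRecord:
    """Hypothetical stand-in for one saved browser tab."""
    url: str               # most recent page loaded in the tab
    last_focus: datetime   # when the tab was last opened or switched to

    def take_focus(self, when: datetime) -> None:
        # Opening or switching to the tab updates the timestamp.
        self.last_focus = when

    def navigate(self, url: str) -> None:
        # Loading a new page in an already-focused tab changes only the URL.
        self.url = url

# The tab takes focus at 2:27 AM while showing some earlier page.
tab = TabRecord(url="https://example.com/earlier-page",
                last_focus=datetime(2022, 1, 29, 2, 27))

# Under this model, a search run in the same tab at 6:24 AM overwrites the URL
# but leaves the 2:27 AM focus timestamp untouched.
tab.navigate("https://example.com/search?q=later+query")

print(tab.url, tab.last_focus)
```

A tool that pairs the record’s URL with its timestamp would report the later search at the earlier time. Whether iOS really behaved this way on McCabe’s phone is exactly what the two experts disputed.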

Whiffin also addressed the fact that the search in the BrowserState.db WAL file is a deleted record. He explained it was likely deleted during an automatic system cleanup, not by the user. Whiffin said that if a user manually deletes internet history items in Safari, gaps appear in the History.db file. He stated that Jennifer McCabe’s History.db file showed no gaps, suggesting there was no user-deleted Safari activity during the relevant timeframe.

Which expert do you think got it right?

Karen Read’s first trial in 2024 ended in a hung jury. Her second trial in 2025 resulted in an acquittal on all major charges. She was convicted only of operating under the influence and received a sentence of one year of probation.

Ask an Analyst

Do you have a question about digital forensics or electronic surveillance? Please send it to AskDFU@legal-aid.org and we may feature it in an upcoming issue of our newsletter. No identifying information will be used without your permission.

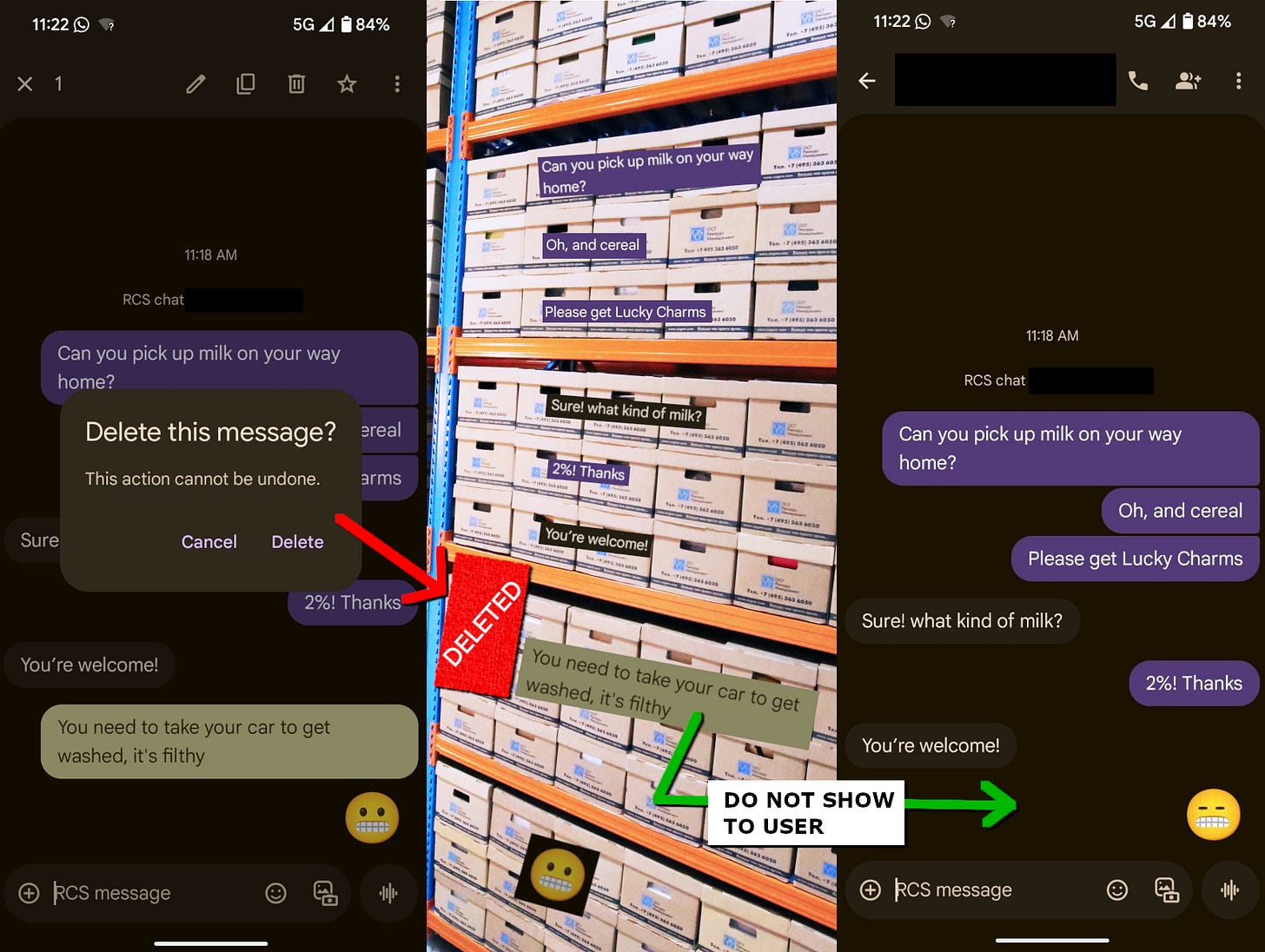

Q. When I delete a message from my phone, what actually happens to the data?

A. Text messages are usually stored in a database, which is like a warehouse for data. It organizes and maintains message content and information about that message, such as when it was sent or received.

A successfully deleted message will either be “soft-deleted” or “hard-deleted” from a database. A soft-delete will flag a message, and that flag tells the app not to display that message to the user. The message content and metadata are still saved in the phone’s storage alongside the other messages, but will not be visible to someone operating the phone itself. You might compare this to a product recall in a warehouse, where goods are no longer offered to the customer but still sit on a shelf.
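
A minimal sketch of a soft-delete, using Python’s built-in sqlite3 module. The `message` table, its column names, and the `is_deleted` flag are hypothetical; real apps each use their own layout.

```python
import sqlite3

con = sqlite3.connect(":memory:")
con.execute(
    "CREATE TABLE message ("
    " id INTEGER PRIMARY KEY,"
    " body TEXT,"
    " sent_at TEXT,"
    " is_deleted INTEGER DEFAULT 0)"  # hypothetical soft-delete flag
)
con.executemany(
    "INSERT INTO message (body, sent_at) VALUES (?, ?)",
    [("on my way", "2025-09-01 09:00"),
     ("delete me", "2025-09-01 09:05"),
     ("see you soon", "2025-09-01 09:10")],
)

# Soft-delete: flag the row instead of removing it.
con.execute("UPDATE message SET is_deleted = 1 WHERE id = 2")

# What the app shows the user: flagged rows are filtered out.
visible = [row[0] for row in con.execute(
    "SELECT body FROM message WHERE is_deleted = 0 ORDER BY id")]

# What is still sitting in storage: every row, flagged or not.
stored = [row[0] for row in con.execute(
    "SELECT body FROM message ORDER BY id")]

print(visible)
print(stored)
```

The “deleted” message disappears from the user’s view but remains an ordinary row in the table, with its content and timestamp intact.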

A hard-delete will clear out the entire record, including its metadata. It can leave behind a gap indicating that a message was once there. For example, if you looked at the message database, you might see messages with IDs 1, 2, and 4 and then ask: “what happened to message entry #3?” Apps built with privacy in mind may choose to hard-delete message content instead of storing the data.
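
The gap left by a hard-delete can be sketched the same way. The `message` table and the `missing_ids` helper below are hypothetical and only illustrate the idea of looking for skipped record IDs.

```python
import sqlite3

def missing_ids(con):
    """Return IDs skipped between the lowest and highest IDs still present."""
    ids = [row[0] for row in con.execute("SELECT id FROM message ORDER BY id")]
    if not ids:
        return []
    present = set(ids)
    return [i for i in range(ids[0], ids[-1] + 1) if i not in present]

con = sqlite3.connect(":memory:")
con.execute("CREATE TABLE message (id INTEGER PRIMARY KEY, body TEXT)")
con.executemany(
    "INSERT INTO message (body) VALUES (?)",
    [("first",), ("second",), ("third",), ("fourth",)],
)

# Hard-delete: the whole record, metadata included, is removed.
con.execute("DELETE FROM message WHERE id = 3")

gaps = missing_ids(con)
print(gaps)
```

A gap shows that a record once existed, not what it said or who removed it. This check also cannot see a deletion at the very end of the table, and some databases reuse IDs, so the absence of gaps is weaker evidence than their presence.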

The above only addresses two simplified deletion events. Deleting a message from your phone may result in several different outcomes depending on how it happened.

Did you delete a message on your iPhone from iMessage? If so, the message is “moved” into the Deleted Messages folder on your phone for 30 days (soft-delete), then wiped after the timer runs out (hard-delete).

If you select the option to “delete for everyone” on a message in Signal, the app should send an encrypted message to other participants that, once read by the phone, deletes their copy of that item. However, this request can be ignored if you are communicating using Signal-JW, a “fork” (modified version) of the main Signal app.

In Microsoft Teams, deleted content is copied into various temporary folders in the cloud, sometimes being held for years if there is a litigation hold in place.

Messages may be hard-deleted without a specific action by the user if they have enabled scheduled message expiration or disappearing messages.

Q. Will forensic tools be able to recover it?

A. If we return to the metaphor of a warehouse where deleted messages are product recalls, they may be flagged in the inventory (soft-delete) first, but are eventually thrown away and no longer tracked (hard-delete). For a soft-delete, all the forensic tool needs to do is fetch the message content from its expected location. The content is still in the phone’s “message warehouse,” after all.

There is still a chance of recovery when a message has been thrown away through hard-deletion. When a hard-deleted message is removed from a database, that data may not be immediately wiped. If the message were a product in a warehouse, it might be sitting on a shelf in a trash bag waiting to be taken out – but not prioritized until there is another product that needs to be stored there. Some databases have “Auto Vacuuming” set for the most rapid destruction of deleted content, just as some warehouses might have a policy to destroy trash immediately instead of letting it collect. A forensic tool could check a “free list” to identify areas of the database that are marked as empty (or free to use) and display any messages sitting in a free page like trash waiting to be disposed of.
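
The free list can be observed directly in SQLite. The sketch below uses Python’s built-in sqlite3 module and two real SQLite settings, `PRAGMA auto_vacuum` and `PRAGMA freelist_count`; the `message` table and the `free_pages_after_delete` helper are hypothetical. It shows only the bookkeeping of free pages, not whether old bytes are still readable, which also depends on settings such as `secure_delete` and on the storage hardware.

```python
import os
import sqlite3
import tempfile

def free_pages_after_delete(auto_vacuum: str) -> int:
    """Fill a scratch database, delete every row, and count pages left on the free list."""
    path = os.path.join(tempfile.mkdtemp(), "messages.db")
    con = sqlite3.connect(path)
    # auto_vacuum has to be chosen before the first table is created.
    con.execute(f"PRAGMA auto_vacuum = {auto_vacuum}")
    con.execute("CREATE TABLE message (id INTEGER PRIMARY KEY, body TEXT)")
    con.executemany(
        "INSERT INTO message (body) VALUES (?)",
        [("x" * 500,) for _ in range(200)],  # enough data to span many pages
    )
    con.commit()

    con.execute("DELETE FROM message")  # hard-delete everything
    con.commit()

    free = con.execute("PRAGMA freelist_count").fetchone()[0]
    con.close()
    return free

without_vacuum = free_pages_after_delete("NONE")
with_vacuum = free_pages_after_delete("FULL")
print(without_vacuum, with_vacuum)
```

With auto-vacuum off, the emptied pages stay in the file on the free list, which is where a forensic tool might look for leftovers. With auto-vacuum set to FULL, SQLite trims the free pages from the file at each commit, so the free list ends up empty.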

Additionally, changes to a SQLite database running in write-ahead logging mode are added to a “Write-Ahead Log” (-WAL file) before the main database is updated. Forensic tools may identify both the deleted records and any newer data in this file, as it’s like a draft area for database changes. That draft area is temporary – once changes are checkpointed into the main database, the log’s contents can be overwritten or cleared.
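
A small sketch of that draft area, again with Python’s built-in sqlite3 module. `PRAGMA journal_mode = WAL` and `PRAGMA wal_checkpoint(TRUNCATE)` are real SQLite commands; the file name and `message` table are hypothetical. An explicit TRUNCATE checkpoint is used here to make the clearing visible, and it is only one of several ways the log’s contents can go away.

```python
import os
import sqlite3
import tempfile

path = os.path.join(tempfile.mkdtemp(), "messages.db")
con = sqlite3.connect(path)

# Switch the database to write-ahead logging; SQLite reports the mode now in use.
mode = con.execute("PRAGMA journal_mode = WAL").fetchone()[0]

con.execute("CREATE TABLE message (id INTEGER PRIMARY KEY, body TEXT)")
con.execute("INSERT INTO message (body) VALUES ('draft area demo')")
con.commit()

# The committed change is recorded in the companion "-wal" file.
wal_path = path + "-wal"
wal_size_before = os.path.getsize(wal_path)

# Checkpoint: copy the logged changes into the main database and empty the log.
con.execute("PRAGMA wal_checkpoint(TRUNCATE)").fetchall()
wal_size_after = os.path.getsize(wal_path)

con.close()
print(mode, wal_size_before, wal_size_after)
```

If a forensic preservation happens before a checkpoint, the -WAL file may hold records that never appear in, or have already been removed from, the main database file. If it happens afterward, that window may have closed.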

The quality of any recovered data may vary from pristine artifacts, with all content and metadata intact... to a few partial phone numbers or keywords, the digital equivalent of breadcrumbs. That is, if data is recovered at all.

Q. Why are forensic tools sometimes able to recover deleted data when other times they can't?

A. Some forensic preservations may not collect all relevant data, such as the temporary -WAL file or the information necessary to decrypt a database (the keys to the warehouse). This may be due to security features on the phone, investigator oversight, or legal limitations to the search.

Sometimes forensic tools don’t “know” how to find certain data. Depending on the app, messages may be stored in a different format than the tool expects. If a forensic expert is working on the case, they can attempt manual forensic analysis, using software and investigation methods in addition to the automated capabilities built into a forensic tool. This may unearth more deleted data in screenshots, logs, app notifications, unallocated space, other devices, or copies of data in the cloud.

Future software updates to forensic tools generally improve their capabilities. This may enable the recovery of more data by automated methods – a final consideration in determining whether that deleted message can be found.

Allison Young, Digital Forensics Analyst

Upcoming Events

September 9-11, 2025

Amped User Days (Amped Software) (Virtual)

UC Berkeley Law AI Institute (Berkeley, CA and Virtual)

September 10, 2025

Protecting Against Scams and Data Breaches (NYCLA) (Virtual)

September 17, 2025

AI in Philly: How artificial intelligence is secretly shaping our city (Philly Tech Justice Collective) (Virtual)

September 24, 2025

Digital Witness: The Legal Frontier of AI Evidence (ABA) (Virtual)

Truth or Tech? Navigating AI-Generated Evidence in the Courtroom (NACDL) (Virtual)

September 25, 2025

Policing in the Age of AI: Benefits, Risks, and the Need for Transparency (Policing Project at NYU Law School) (Virtual)

October 2-3, 2025

NYSDA’s Fall Forensics Conference: Evidence Unlocked (NYSDA) (Albany, NY)

October 13-14, 2025

Artificial Intelligence and Robotics National Institute (ABA) (Santa Clara, CA)

October 15, 2025

Ethically Responsible Use of AI in Practice (NYSBA) (Virtual)

October 21-23, 2025

Legacy & Logic: 25 Years of Digital Discovery (Oxygen Forensics) (Orlando, FL)

October 27-29, 2025

Techno Security & Digital Forensics Conference West (San Diego, CA)

October 27-31, 2025

36th Annual LEVA Training Symposium (Coeur d’Alene, ID)

October 29, 2025

Understanding AI: Reorienting AI for the Public Interest (Data & Society and The NY Public Library) (New York, NY and Virtual)

November 16-22, 2025

SANS DFIRCON Miami 2025 (Coral Gables, FL)

November 20, 2025

Understanding AI: Standing Up for Human Value in the AI Economy (Data & Society and The NY Public Library) (New York, NY and Virtual)

April 23-25, 2026

19th Annual Making Sense of Science: Forensic Science, Technology & the Law (NACDL) (Las Vegas, NV)

Small Bytes

Digital IDs Put Health Care Privacy at Risk (Convergence Magazine)

Americans, Be Warned: Lessons From Reddit’s Chaotic UK Age Verification Rollout (EFF)

What Does Palantir Actually Do? (Wired)

Government Documents Show Police Disabling AI Oversight Tools (Mother Jones)

How Tea’s Founder Convinced Millions of Women to Spill Their Secrets, Then Exposed Them to the World (404 Media)

Home Depot Sued for ‘Secretly’ Using Facial Recognition Technology on Self-Checkout Cameras (PetaPixel)

We must fight age verification with all we have (User Mag)

Citizen Is Using AI to Generate Crime Alerts With No Human Review. It’s Making a Lot of Mistakes (404 Media)

A Teen Was Suicidal. ChatGPT Was the Friend He Confided In. (NY Times)

OpenAI Says It's Scanning Users' ChatGPT Conversations and Reporting Content to the Police (Futurism)

Unfortunately, the ICEBlock app is activism theater (Micah Lee)

Ice obtains access to Israeli-made spyware that can hack phones and encrypted apps (The Guardian)