Post-Roe Digital Privacy, Surveillance Profiteering, Cryptocurrency, EXIF data, & More

Vol. 3, Issue 6

June 6, 2022

Welcome to Decrypting a Defense, the monthly newsletter of the Legal Aid Society’s Digital Forensics Unit. Shane Ferro highlights the role of digital forensics in a post-Roe world. Diane Akerman discusses the misuse of civil asset forfeiture funds for surveillance. Benjamin Burger reviews a recent court decision debunking some cryptocurrency myths. Examiner Chris Pelletier explains how metadata relates to digital videos and images.

The Digital Forensics Unit of the Legal Aid Society was created in 2013 in recognition of the growing use of digital evidence in the criminal legal system. Consisting of attorneys and forensic analysts and examiners, the Unit provides support and analysis to the Criminal, Juvenile Rights, and Civil Practices of the Legal Aid Society.

In the News

Spotlight on Digital Privacy in a Post-Roe World

Shane Ferro, Digital Forensics Staff Attorney

It’s June and we are inching closer to the day that Justice Alito’s leaked draft decision in Dobbs likely gets released, overturning Roe and the constitutional right to access abortion in America.

Once the initial shock of the leaked decision faded, the focus shifted to the immediate implications of life in a post-Roe America. At some point this month, it’s almost certain that it will become illegal to get an abortion in much of the South, large parts of the Midwest, and a handful of other states. Healthcare clinics will cease to exist, people will be forced to carry their pregnancies to term, and some will almost certainly die.

Many of the states that outlaw abortion will also criminalize it (or already have), making it all the more important that people throughout the country are able to access information and seek out healthcare privately and without government interference. Unfortunately, digital evidence is likely to play a major role in the prosecution of women for seeking abortions, or of the providers who perform them.

Seemingly overnight, major media outlets were churning out servicey articles about protecting digital privacy and giving a platform to advocates who have been arguing for greater restrictions on government surveillance for years. The leaked opinion served as a momentary wake-up call, focusing mass media attention on the fact that our digital world makes it much too easy for the government to conduct dragnet surveillance and track everything from your search history to your recent location data to your private texts and phone calls.

A number of resources have popped up across the internet over the last month focusing specifically on helping those who may be considering an abortion, notably guides from the Electronic Frontier Foundation and the Digital Defense Fund (also available in Spanish).

Much of the content underlines something that we know well here at DFU: it is incredibly difficult and time-consuming for a person to completely protect their digital privacy on their own, without legislation that prevents companies from selling their data or the government from accessing it. It means, in part, living with reduced functionality on your phone by turning location settings off, potentially disconnecting completely from Bluetooth, being constantly vigilant about the security of the browser you are using to search, having multiple email addresses, eschewing your phone’s built-in calling and texting capabilities in favor of more secure or anonymous apps, and knowing the minute details of the settings of each app on your phone.

It’s exhausting even for those who know every step they need to take, and likely close to impossible for someone living with the stress of an unwanted or dangerous pregnancy in a state that has banned the healthcare they need.

Surveilling for Profit

Diane Akerman, Digital Forensics Staff Attorney

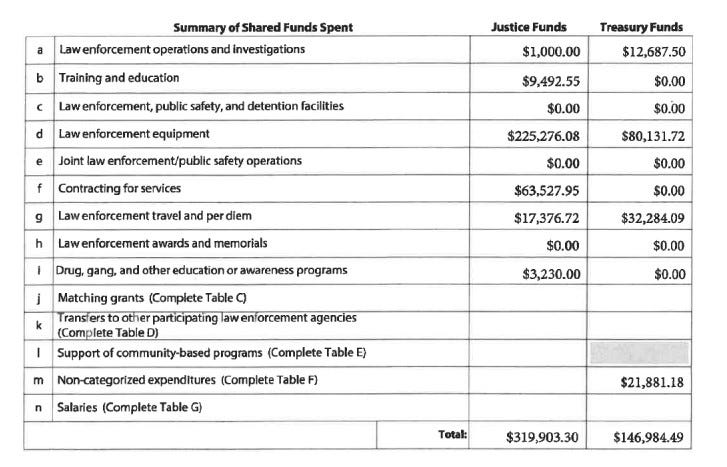

Previous records revealed that the Staten Island DA’s office contracted with Clearview AI for use of its services. More recent documents show that funding for the contract came from the Federal Equitable Sharing Program (FESP), a vast federal asset forfeiture program that allows local municipalities access to seized funds in exchange for cooperation and assistance in investigations.

Asset forfeiture programs have been under increased scrutiny because funds and property are often seized regardless of conviction, and the process is notoriously difficult to fight. The practice is widely dubbed “policing for profit”: it incentivizes local law enforcement agencies to seize funds without due process, without actually accomplishing forfeiture’s intended purpose.

States and localities have introduced reforms to limit the abuses of civil asset forfeiture programs, but participation in the FESP allows local law enforcement to circumvent those restrictions. Last year, the House Committee on Oversight and Reform held a hearing addressing many problems with civil asset forfeiture practices, and in a recent letter to the DOJ [PDF], a bipartisan group of Senators expressed concern about abuses of the FESP.

Among the concerns was the lack of adequate oversight of agencies participating in the FESP, resulting in frivolous purchases. The documents disclosed pursuant to New York’s Freedom of Information Law (FOIL) underscore that concern. In its annual certification, the Richmond County District Attorney’s office (RCDA), which serves Staten Island, was not required to provide any specifics about the use of hundreds of thousands of dollars of funds. The certification form provides a number of general categories, but requires no further clarification or details. There is no way to determine from the documentation how the RCDA categorized the use of funds for the contract with Clearview AI.

Frivolous purchases are one problem, but using the funds for more nefarious purposes, such as surveilling the community you claim to protect and represent, is quite another. The documents show that the technology was used [PDF] in a variety of ways – from the wholly unnecessary to the deeply problematic. In some cases, the program was used to search for the social media accounts of already known individuals (rather than to identify an unknown individual), seemingly serving little investigative function. A handwritten note on another case reading “deportation case” is especially alarming, as immigration cases are not within the purview of a local DA’s office, especially one located within a sanctuary city.

Communities suffering from the devastating consequences of over-policing are under attack from all sides: they are surveilled, harassed, arrested, prosecuted, and convicted at higher rates, and their property is seized and then used to pad the coffers of local law enforcement, including District Attorneys’ offices, to buy more equipment, increasingly invasive technology, and personnel power to ensure the cycle of oppression continues.

And no one polices the police.

In the Courts

Cryptocurrency and Blockchain Tracing

Benjamin Burger, Digital Forensics Staff Attorney

Cryptocurrency and blockchain technology have become commonplace in American society. People trade cryptocurrencies, like Bitcoin and Ethereum, and collect non-fungible tokens (NFTs). However, an even larger portion of the public has no idea what these technologies are and, more importantly, what they do. Setting aside the numerous ethical issues surrounding these technologies, it is important for criminal defense attorneys to have a basic knowledge of cryptocurrency and how it can present in a case.

This month, United States Magistrate Judge Zia M. Faruqui unsealed a decision, In re Criminal Complaint, 22-mj-067 (D.D.C. May 13, 2022) [PDF], holding that the government had probable cause to issue a criminal complaint charging an unnamed individual with operating a payments platform with the intention of circumventing United States sanctions law. The decision is heavily redacted; however, it provides an interesting explanation of how the federal government investigates crimes involving cryptocurrencies and the blockchain.

One point that Magistrate Judge Faruqui makes in the decision is that most cryptocurrency, like Bitcoin, is not anonymous. In fact, anonymity is the biggest myth about cryptocurrency. Every Bitcoin transaction is publicly available on the blockchain, going back to the beginning of Bitcoin. Think of the blockchain as a bank account ledger, in which all transactions are recorded. Unlike your checking account, in which the ledger of transactions is held and verified solely by your bank, the Bitcoin ledger - the blockchain - is decentralized. That means that a complete copy of every Bitcoin transaction is stored on computers across the world. The blockchain records every transaction, including the amount of money transferred, the time, and the wallets (or accounts) that the money was sent to and received from. Anyone can review the transactions made by any Bitcoin wallet that has sent or received money.
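The ledger analogy can be made concrete with a toy example. The following is a minimal sketch, not how Bitcoin actually stores data: the `Transaction` class, the `LEDGER` entries, and the wallet names are all hypothetical, and amounts are given in satoshis (the smallest Bitcoin unit) purely for illustration. It shows that once every transfer is recorded in a shared ledger, anyone holding a copy can list a wallet's activity.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Transaction:
    sender: str        # wallet that sent the funds
    recipient: str     # wallet that received the funds
    amount_sats: int   # amount transferred, in satoshis
    timestamp: str     # time of the transfer (ISO 8601)


# Hypothetical ledger entries; a real blockchain holds every transaction ever made.
LEDGER = [
    Transaction("wallet_A", "wallet_B", 50_000_000, "2022-01-03T10:15:00Z"),
    Transaction("wallet_B", "wallet_C", 20_000_000, "2022-01-04T08:00:00Z"),
    Transaction("wallet_D", "wallet_A", 125_000_000, "2022-01-05T19:30:00Z"),
]


def transactions_for(wallet, ledger):
    """Return every ledger entry in which the wallet sent or received funds."""
    return [tx for tx in ledger if wallet in (tx.sender, tx.recipient)]


def net_flow(wallet, ledger):
    """Total received minus total sent for the wallet, in satoshis."""
    received = sum(tx.amount_sats for tx in ledger if tx.recipient == wallet)
    sent = sum(tx.amount_sats for tx in ledger if tx.sender == wallet)
    return received - sent


for tx in transactions_for("wallet_A", LEDGER):
    print(tx.timestamp, tx.sender, "->", tx.recipient, tx.amount_sats)
```

No password or permission is involved in the lookup: the only input is the wallet's address, which is why a public ledger is better described as pseudonymous than anonymous.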

Although the owner of a cryptocurrency wallet may be anonymous, interacting with the blockchain inevitably requires providing identifying information. Virtual currency exchanges (VCEs), like Coinbase, require know-your-customer (KYC) information when opening an account. Even if an individual would like to make anonymous cryptocurrency transactions, their identity will be known to the cryptocurrency exchange. Similarly, the IP address used to establish and access the exchange account will also be known to the VCE. The government can subpoena the KYC and IP address information from the VCE to identify - and charge - the person conducting criminal cryptocurrency transactions.
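The reasoning in this paragraph can be sketched in a few lines. This is a simplified illustration under stated assumptions, not a description of any actual investigative tool: the `TRANSFERS` list, the `EXCHANGE_RECORDS` table, the account holder, and the IP address (taken from a range reserved for documentation) are all hypothetical. The idea is that funds are followed along the public ledger until they reach an address for which an exchange holds customer records.

```python
# Hypothetical on-chain transfers, as (sender, recipient) pairs.
TRANSFERS = [
    ("wallet_X", "wallet_Y"),
    ("wallet_Y", "exchange_deposit_1"),
]

# Hypothetical records an exchange might hold for an address it controls.
EXCHANGE_RECORDS = {
    "exchange_deposit_1": {"account_holder": "Jane Doe", "login_ip": "203.0.113.7"},
}


def trace_to_exchange(start, transfers, exchange_records):
    """Follow outgoing transfers from `start` until reaching an address
    that appears in the exchange's records. Returns (address, record),
    or None if no such address is reachable."""
    seen = set()
    frontier = [start]
    while frontier:
        address = frontier.pop()
        if address in seen:
            continue  # avoid looping forever on circular transfers
        seen.add(address)
        if address in exchange_records:
            return address, exchange_records[address]
        frontier.extend(r for s, r in transfers if s == address)
    return None


print(trace_to_exchange("wallet_X", TRANSFERS, EXCHANGE_RECORDS))
```

The public ledger supplies the path between addresses; the identifying details come only from the exchange's own records.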

Although there are more sophisticated ways of hiding cryptocurrency transactions, the reality is that many people, including users, do not understand the limitations of cryptocurrency and the blockchain.

Ask an Examiner

Do you have a question about digital forensics or electronic surveillance? Please send it to AskDFU@legal-aid.org and we may feature it in an upcoming issue of our newsletter. No identifying information will be used without your permission.

Q. I have a case involving digital photos and I want to know what information I can get from the metadata? Is this the same as EXIF data?

A. All digital files contain information called “metadata.” This metadata includes information like the file name, the size of the file, when it was created and last updated, and other data related to the file itself. When we use the term “metadata,” we are referring to this information.
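As a rough illustration of this kind of general file metadata, the sketch below reads a file's name, size, and last-modified time using Python's standard library. The `basic_metadata` function and the sample file are hypothetical, and this is not a forensic procedure; it only shows that the information lives alongside the file rather than inside its contents.

```python
import os
import tempfile
from datetime import datetime, timezone


def basic_metadata(path):
    """Return a few pieces of file system metadata for the file at `path`."""
    info = os.stat(path)
    return {
        "name": os.path.basename(path),
        "size_bytes": info.st_size,
        # Last-modified time, converted to UTC. How a "created" time is
        # recorded varies by operating system, so it is left out here.
        "modified_utc": datetime.fromtimestamp(
            info.st_mtime, tz=timezone.utc
        ).isoformat(),
    }


# Create a small throwaway file so the example runs anywhere.
with tempfile.TemporaryDirectory() as folder:
    sample = os.path.join(folder, "photo.jpg")
    with open(sample, "wb") as handle:
        handle.write(b"12345")
    report = basic_metadata(sample)

print(report)
```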

Video and image files, like digital photos, have a specific type of metadata called EXIF (Exchangeable Image File Format) data. When a video or digital photo is created on a device, the smartphone or camera used to create the image adds EXIF data to the created file. This EXIF data contains information specific to video and image files, like the make and model of the camera or phone, the date the picture or video was created, the location where the picture or video was created (if recorded by a smartphone or camera with location information enabled), the resolution of the picture or video, and the camera settings used (such as shutter speed, aperture, ISO, exposure, focal length, metering mode, flash, and other pieces of information that would be useful only to photographers).

Not every picture or video file will contain all of this EXIF data. For example, a photo created on a smartphone with location services disabled will not contain EXIF data reflecting the location of the image. Also, EXIF data can be changed, edited, or lost. This usually happens when a file is moved, copied, or transferred between devices, and can result in some of the EXIF data changing in a way that renders it unreliable. Moreover, when a picture or video is uploaded to a social media account, most of the EXIF data is usually stripped from the image due to privacy concerns. If you are concerned about the integrity of the EXIF data in a picture or video, then the file should be acquired and preserved from the original device it was created on. This allows a digital forensics examiner to render a sound opinion about the EXIF data.
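For JPEG photos, one way to see whether EXIF data is present at all is to look for the segment that normally carries it. The sketch below is a simplified check under stated assumptions: it handles only JPEG files, it relies on the common layout in which EXIF data sits in an APP1 segment beginning with the bytes `Exif`, it does not read the individual EXIF fields, and it does not handle every unusual file. The function names and the sample bytes are hypothetical, built only so the example runs on its own.

```python
import struct


def has_exif_segment(data):
    """Return True if the JPEG bytes contain an APP1 segment marked 'Exif'."""
    if data[:2] != b"\xff\xd8":           # every JPEG starts with this marker
        return False
    pos = 2
    while pos + 4 <= len(data):
        if data[pos] != 0xFF:             # not at a marker: unexpected layout
            return False
        marker = data[pos + 1]
        if marker in (0xDA, 0xD9):        # image data or end of file reached
            return False
        # The 2-byte length counts itself plus the payload that follows.
        (length,) = struct.unpack(">H", data[pos + 2:pos + 4])
        payload = data[pos + 4:pos + 2 + length]
        if marker == 0xE1 and payload.startswith(b"Exif\x00\x00"):
            return True
        pos += 2 + length                 # jump to the next segment
    return False


def segment(marker, payload):
    """Build one JPEG segment (used here only to make sample data)."""
    return b"\xff" + bytes([marker]) + struct.pack(">H", len(payload) + 2) + payload


# Two tiny stand-ins for real files: one with an EXIF segment, one without.
with_exif = (
    b"\xff\xd8"
    + segment(0xE0, b"JFIF\x00")
    + segment(0xE1, b"Exif\x00\x00" + b"\x00" * 8)
    + b"\xff\xd9"
)
stripped = b"\xff\xd8" + segment(0xE0, b"JFIF\x00") + b"\xff\xd9"

print(has_exif_segment(with_exif), has_exif_segment(stripped))
```

A check like this only answers whether an EXIF block exists in a given copy of a file. It says nothing about whether the values inside it are accurate or unaltered, which is why the original device remains the best source.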

- Chris Pelletier, Digital Forensics Examiner

Upcoming Events

June 6-10, 2022

RightsCon (Virtual)

July 22-24, 2022

A New HOPE (Hackers on Planet Earth) (Queens, NY)

August 11-14, 2022

DEF CON 30 (Las Vegas, NV)

September 7, 2022

Intro To Artificial Intelligence (AI) Part 2: AI As A Litigation Tool (NYSBA) (Virtual)

September 23-25, 2022

D4BL III (Data for Black Lives) (New York, NY)

October 10-12, 2022

Techno Security & Digital Forensics Conference (San Diego, CA)

Small Bytes

An algorithm that screens for child neglect raises concerns (AP News)

After years of gang list controversy, the NYPD has a new secret database. It’s focused on guns. (Gothamist)

Eric Adams Wants Weapons Detectors in the Subway. Would That Bring Safety or “Absolute Chaos”? (New York Focus)

Tech giants pledge support to ban controversial search warrants (TechCrunch)

Human rights groups demand Zoom stop any plans for controversial emotion AI (Protocol)

San Francisco Police Are Using Driverless Cars as Mobile Surveillance Cameras (Vice)

U.S. cities are backing off banning facial recognition as crime rises (Reuters)

The drawbacks of high-tech emergency response (Vox)

FBI Provides Chicago Police With Fake Online Identities for “Social Media Exploitation” Team (The Intercept)

FBI Wiretap Opens Window To Murderous Drug Gang - And A Crucial Flaw In Snapchat Privacy (Forbes)